To understand Nvidia’s prospects in 2026, we need to look back at the company’s most important moves in 2025 and estimate how they will fare in 2026.

Nvidia (NVDA) surprised, and even somewhat confused, many investors and analysts with a deal at the end of the year. The confusion arose because CNBC, which broke the news, reported that Nvidia would acquire Groq for about $20 billion, but Groq’s official announcement described a non-exclusive licensing agreement and a talent hire, not a company acquisition.

Here are the key questions about the deal raised by Bank of America analyst Vivek Arya in a research note shared with TheStreet:

- What does Groq mean by “non-exclusive licensing agreement”?
- Could Nvidia develop this technology itself?
- Can GroqCloud, which remains an independent company, undercut Nvidia’s LPU-based services with cheaper offerings?

Despite these doubts and calling the deal surprising, Arya also said the deal was strategic and complementary. He reiterated a buy rating and $275 price target on Nvidia stock.

To understand the Groq deal, we need to delve deeper into what Groq technology is and what the tech industry’s dominant strategy has evolved into.

Groq’s main business is GroqCloud, an artificial intelligence inference platform. AI inference is the process of generating responses from a trained AI model.

Groq gives developers a way to run AI models on the company’s hardware and get responses quickly and at a competitive price. The reason a relatively small startup can compete with large enterprises and offer competitive prices for AI inference is its hardware.

The company’s inference platform uses application-specific integrated circuit (ASIC) chips called language processing units (LPUs), which are developed and optimized for LLM inference.

GPUs can be used for many different computations, including gaming, 3D rendering, crypto mining, AI training, and AI inference, but Groq’s LPU chip has only one purpose – AI inference.

This narrow focus makes them many times more efficient at that one task.

When Gemini 3 launched, Google said the model had been trained 100% on its tensor processing units (TPUs), and of course it also runs inference on TPUs. As you may have guessed, the TPU is also an ASIC chip.

Here’s what Nvidia posted on X (formerly Twitter) in response:

“We are delighted with Google’s success – they have made huge strides in AI and we will continue to supply Google. NVIDIA is a generation ahead of the industry – it is the only platform that can run every AI model and execute wherever computation is done. NVIDIA offers better performance, versatility and fungibility than ASICs, which are designed for a specific AI framework or function.”

The fact that Nvidia felt the need to address Google’s success in a post suggests that the company is worried about the competitiveness of well-designed ASIC chips, and now we have proof.

Groq’s announcement about the deal with Nvidia said: “As part of the agreement, Groq founder Jonathan Ross, Groq president Sunny Madra and other members of the Groq team will join Nvidia to help advance and expand the licensed technology.”

Can you guess what Jonathan Ross’s job was at Google? He was one of the designers of Google’s first-generation TPU. Nvidia’s decision to license Groq’s LPU technology stack and “acquire” its talented team is a tacit admission that ASIC chips represent the future of artificial intelligence.

The non-exclusive licensing structure is a way to avoid government scrutiny. The approach here is a combination of Apple’s and Meta’s strategies. Apple designs custom ARM chips under a non-exclusive licensing agreement with ARM.

But the reason Apple’s chips are great is the talent that only Apple has managed to attract; so far, rival ARM chips have not been able to catch up.

Nvidia secured top talent in the deal by mirroring Meta’s move (investment in Scale AI). It turns out that the entire deal with Scale AI was more about getting Alexander Wang to lead Meta’s superintelligence division than investing in Scale AI.

This is the new dominant strategy in technology, where talent is more valuable than the entire company.

Assuming there’s nothing special about Nvidia’s contract with Groq, the non-exclusive license should mean that other companies can license the LPU design and build similar LPUs. Nvidia is content with that because those companies are not getting the talent, and it is betting they won’t be able to build anything impressive from a license alone.

Arya’s second question is whether Nvidia could build the LPU itself, which seems like a moot question. Even if the company were able to develop such a chip (assuming there are no patent issues), it wouldn’t be able to do so in the time frame it needs.

This reinforces my argument: Nvidia started worrying about TPUs a little late.

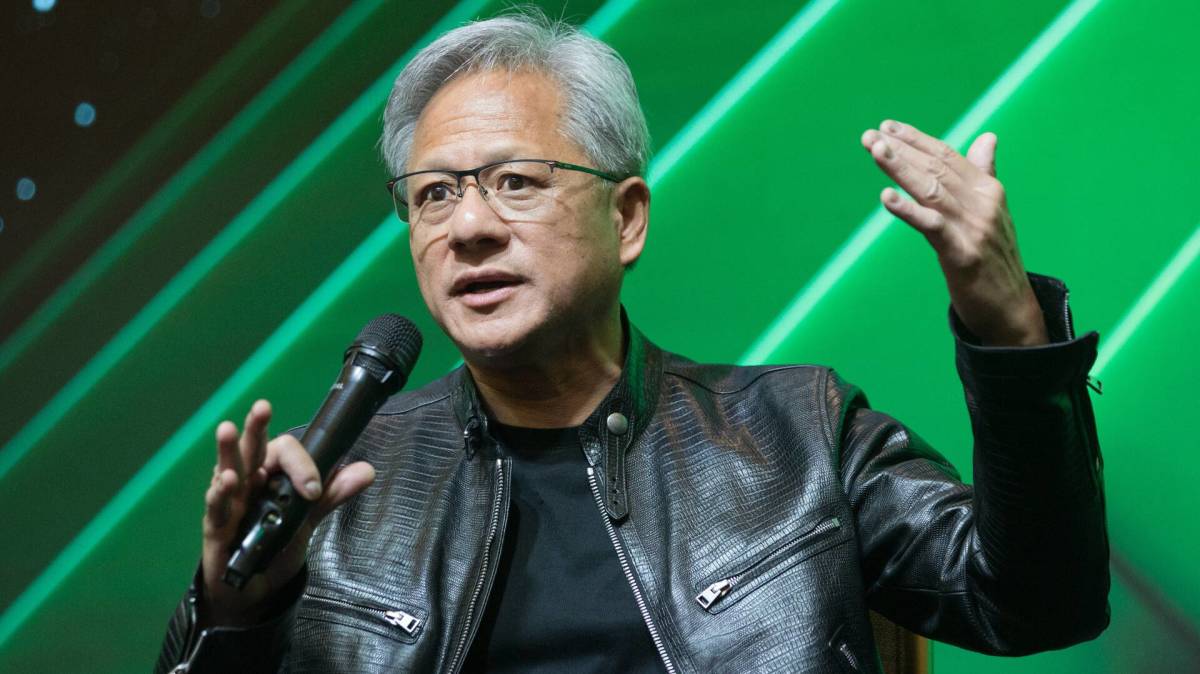

To answer Arya’s third question, we first need to determine Nvidia’s strategy for LPU. In an email to employees obtained by CNBC, Nvidia CEO Jensen Huang wrote:

“We plan to integrate Groq’s low-latency processors into the NVIDIA AI Factory architecture, extending the platform to serve a wider range of AI inference and real-time workloads.”

Huang has been promoting the idea of AI factories for some time, and he appears to be increasingly focused on it. This new LPU plan finally made the whole thing clear to me, and the sudden switch to inference was very interesting and enlightening.

After the hype surrounding the pursuit of artificial general intelligence, or superintelligence, the market is turning toward inference. You might think that training capabilities would remain crucial as long as we haven’t reached that life-changing technology.

The problem is that LLMs have peaked. The shift to inference and “AI factories” is Huang’s quiet focus, and LPUs are only one piece of the puzzle. Nvidia recently released the Nvidia Nemotron 3 series of open models, data and libraries. These models are key components of its latest pivots, namely AI factories and sovereign AI.

Data ownership, privacy, and model fine-tuning are some of the reasons any company or organization capable of having sovereign AI would want it. This is why open-source models (or at least open-weight models) are the future, just like ASIC chips.

According to Wired, we can see a slow but continued shift in this direction, as Qwen has been used in hundreds of academic papers published at NeurIPS, a top artificial intelligence conference.

“A lot of scientists are using Qwen because it’s the best open weight model,” Andy Konwinski, co-founder of the Laude Institute, a nonprofit dedicated to advocating for open American models, told Wired.

Huang’s plan appears to be a complete sovereign AI solution: the fastest inference at the lowest power consumption from the LPU, GPUs for training, and Nemotron as the entry-point software platform.

Arya also wrote in his note: “We envision a future NVDA platform where GPUs and LPUs coexist in the rack, seamlessly connected to NVDA’s NVLink network fabric.”

I firmly believe this idea is wrong.

The LPU has a completely different memory model, based on SRAM, which is very expensive and very fast. According to Groq, its LPUs are connected directly via a plesiosynchronous chip-to-chip protocol, aligning hundreds of chips to act as a single core.

Groq calls its chip-to-chip interconnect technology RealScale. LPUs have another key difference compared to GPUs: they are deterministic. These architectural differences mean that LPU and GPU chips cannot work together to run the same software (perform inference), and putting them in the same rack will only cause problems and complicate things.

Each LPU has very little memory, so running large LLMs requires a large number of LPUs. This will be the determining factor in how many racks of LPUs are required to run a given model.
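The memory constraint can be turned into a rough back-of-the-envelope estimate. The sketch below is only illustrative: the 230 MB of SRAM per chip, the 70-billion-parameter model, the one byte per parameter, and the 64 chips per rack are all assumptions that do not come from this article, and the function names are made up for the example. It counts only model weights and ignores activations and caches, so a real deployment would likely need more chips.

```python
def chips_needed(num_params: int, bytes_per_param: int, sram_bytes_per_chip: int) -> int:
    """Minimum number of chips whose combined SRAM can hold the model weights."""
    weight_bytes = num_params * bytes_per_param
    # Integer ceiling division: a partially filled chip still counts as a whole chip.
    return -(-weight_bytes // sram_bytes_per_chip)


def racks_needed(chips: int, chips_per_rack: int) -> int:
    """Number of racks needed to house the given number of chips."""
    return -(-chips // chips_per_rack)


# All values below are illustrative assumptions, not figures from the article.
NUM_PARAMS = 70_000_000_000        # hypothetical 70B-parameter model
BYTES_PER_PARAM = 1                # assumes 8-bit weights
SRAM_BYTES_PER_CHIP = 230_000_000  # assumed 230 MB of on-chip SRAM
CHIPS_PER_RACK = 64                # hypothetical rack density

chips = chips_needed(NUM_PARAMS, BYTES_PER_PARAM, SRAM_BYTES_PER_CHIP)
racks = racks_needed(chips, CHIPS_PER_RACK)
print(f"{chips} chips across {racks} racks")
```

Under these assumed numbers the estimate comes to 305 chips across 5 racks, which shows how quickly the chip count grows with model size when each chip holds so little memory.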

It’s certainly possible that Nvidia could develop an LPU significantly different from Groq’s to allow mixing with GPUs in the same rack, but that would take more time to develop. I think that for Huang’s AI factory plans, development speed is the first priority.

In any case, considering that chip design takes at least a year, it is extremely unlikely that Nvidia’s LPU will be launched in 2026. The Groq transaction and the inference pivot tell us that we need to pay very close attention to what happens with OpenAI.

On December 19, Reuters reported that SoftBank Group was racing to complete its $22.5 billion financing commitment to OpenAI. Considering SoftBank has committed to investing the money by the end of the year, it is cutting it very close.

Waiting until the last minute to follow through can make the company look unsure whether it’s a good investment.

According to a Reuters report on December 2, Nvidia’s agreement to invest up to $100 billion in OpenAI has not yet been finalized. According to Forbes, OpenAI does not expect to have positive cash flow until 2030.

It’s easy to see why Nvidia is in no rush to finalize a deal with OpenAI. The best-case scenario for OpenAI is that Nvidia is waiting for it to IPO first, and the worst-case scenario is of course no deal.

If OpenAI fails to secure more investment, it will have a domino effect, with Oracle, Nvidia and Microsoft being hurt the most. Nvidia’s AI factory strategy is a good way for the company to protect itself from its reliance on OpenAI as a customer.

According to leaked information, Intel Serpent Lake is the first chip to feature an integrated Nvidia GPU, and it won’t launch before 2027. Even so, 2028 is more likely, PC Gamer reported.

Bank of America’s latest research report is from November and includes a forecast for Nvidia. Arya and his team expect Nvidia’s fiscal 2026 revenue to be $212.83 billion and non-GAAP earnings per share to be $4.66. Nvidia’s third-quarter gaming revenue missed consensus estimates by 4%. According to PC Gamer, there are rumors that Nvidia plans to cut gaming GPU production by as much as 40% in 2026 due to VRAM supply issues.

We can foresee that gaming revenue could easily fall short of consensus again: the memory industry is going all-in on AI, and soaring RAM prices will have the side effect of reducing gaming PC sales and manufacturing.

A similar story was evident in the automotive space, where Nvidia’s third-quarter revenue missed consensus estimates by 6%. The company’s guidance for the fourth quarter was $592 million, significantly below the consensus estimate of $700 million.

The company’s fourth-quarter guidance for the professional visualization segment was optimistic at $760 million, above the consensus estimate of $643 million. The fourth-quarter outlook for the OEM segment, which includes cryptocurrency-related products, was close to consensus at $174 million, compared with $172 million in the year-ago quarter.
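The size of these gaps is easier to compare as percentages. Here is a minimal sketch using only the automotive and professional visualization figures quoted above; the function name `pct_vs_consensus` is made up for illustration.

```python
def pct_vs_consensus(figure_musd: float, consensus_musd: float) -> float:
    """Percentage by which a figure sits above (+) or below (-) consensus."""
    return (figure_musd - consensus_musd) / consensus_musd * 100


# Figures in millions of dollars, as quoted in the text.
segments = {
    "Automotive": (592, 700),
    "Professional visualization": (760, 643),
}

for name, (figure, consensus) in segments.items():
    print(f"{name}: {pct_vs_consensus(figure, consensus):+.1f}% vs. consensus")
```

On these figures, the automotive number sits roughly 15% below consensus, while the professional visualization number sits roughly 18% above it.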

The non-data center segments pale in comparison with the data center business, where Nvidia’s forecast is $51.2 billion and the consensus estimate for the fourth quarter is $57 billion. Revenue in the non-data center segments will continue to shrink as the company focuses more on its highest-margin products.

The launch of the Vera Rubin series will be a defining moment in 2026, because if these chips deliver the promised performance and efficiency gains, it will remove any doubts about Nvidia’s supremacy.

Next year will be Nvidia’s year, especially if rumors reported by Android Headlines that Google has failed to secure HBM supply for its TPUs are true.

I wouldn’t be surprised if this rumor is true, as Huang is always a few steps ahead of the competition, except for that moment when he underestimated Google’s TPU.

This article was originally published by TheStreet on December 28, 2025, and first appeared in the Investment section.